Azure Blob Storage: 7 Powerful Benefits You Can’t Ignore

If you’re dealing with massive amounts of unstructured data, Azure Blob Storage is your ultimate cloud solution. Scalable, secure, and cost-effective, it’s the go-to choice for businesses leveraging Microsoft’s cloud ecosystem.

What Is Azure Blob Storage?

Azure Blob Storage is Microsoft’s object storage solution for the cloud, designed to store vast amounts of unstructured data such as text, images, videos, logs, and backups. Unlike traditional file systems, Blob Storage organizes data into containers and blobs, making it ideal for modern cloud-native applications.

Understanding Unstructured Data

Unstructured data refers to information that doesn’t conform to a predefined data model or format. This includes media files, sensor data, social media content, and more. Azure Blob Storage excels at handling this type of data efficiently.

- Examples: PDFs, MP4s, JSON files, CSV exports

- Challenges: Scalability, retrieval speed, metadata management

- Solution: Object-based storage with REST APIs

“Azure Blob Storage provides durable, highly available, and scalable cloud storage for unstructured data.” — Microsoft Azure Documentation

Core Components of Blob Storage

The architecture of Azure Blob Storage revolves around three main components: storage accounts, containers, and blobs. Each plays a critical role in organizing and accessing your data.

- Storage Account: The top-level namespace for your data, providing a unique address (e.g., https://mystorage.blob.core.windows.net).

- Container: A logical grouping of blobs, similar to a folder but without nested hierarchies.

- Blob: The actual data object, which can be a block blob, append blob, or page blob.

Each blob has a unique URL composed of the storage account name, container name, and blob name, enabling direct HTTP/HTTPS access.
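As a rough illustration of that URL scheme, the sketch below composes a blob URL from its three parts. The helper name `blob_url` is hypothetical, and it assumes the public Azure cloud endpoint suffix `blob.core.windows.net`; sovereign clouds and custom domains use different hosts.

```python
from urllib.parse import quote


def blob_url(account: str, container: str, blob_name: str) -> str:
    """Compose the HTTPS URL of a blob (assumes the public Azure cloud suffix)."""
    # Percent-encode the blob name but keep "/" so virtual folder paths stay readable.
    encoded_name = quote(blob_name, safe="/")
    return f"https://{account}.blob.core.windows.net/{container}/{encoded_name}"


print(blob_url("mystorage", "mycontainer", "reports/2024 summary.pdf"))
# https://mystorage.blob.core.windows.net/mycontainer/reports/2024%20summary.pdf
```

A URL like this only works for anonymous requests if the container allows public access; otherwise the request needs a SAS token or an authorization header.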

Types of Blobs in Azure Blob Storage

Azure supports three types of blobs, each optimized for specific use cases. Choosing the right type ensures optimal performance and cost-efficiency.

Block Blobs

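Block blobs are ideal for storing text and binary files, including documents, images, and videos. They are composed of blocks: with current service versions each block can be up to 4,000 MiB (older versions capped blocks at 100 MiB), and a blob can hold up to 50,000 blocks, for a maximum blob size of about 190.7 TiB.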

- Best for: Static content delivery (e.g., website assets)

- Use case: Uploading large video files in chunks

- Feature: Blocks can be uploaded in parallel and committed in order

For developers, block blobs support operations like Put Block and Put Block List, enabling resumable uploads and efficient large file transfers. The official Microsoft documentation covers block blob operations in more detail.
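To make the Put Block / Put Block List flow more concrete, here is a minimal sketch of the planning step only: it splits a file of a given size into blocks and assigns each a block ID. It does not call Azure. The function name `plan_blocks` and the ID format are assumptions for illustration; what the service does require is that block IDs are Base64 strings of equal length within a blob, and that a block blob has at most 50,000 committed blocks.

```python
import base64

MAX_BLOCKS = 50_000  # service limit on committed blocks per block blob


def plan_blocks(total_size: int, block_size: int) -> list:
    """Return a list of (block_id, offset, length) tuples describing each block."""
    if total_size < 0 or block_size <= 0:
        raise ValueError("total_size must be >= 0 and block_size must be > 0")
    count = -(-total_size // block_size)  # ceiling division
    if count > MAX_BLOCKS:
        raise ValueError("too many blocks; choose a larger block size")
    plan = []
    for index in range(count):
        offset = index * block_size
        length = min(block_size, total_size - offset)
        # Zero-padded counter keeps every encoded ID the same length.
        block_id = base64.b64encode(f"{index:08d}".encode()).decode()
        plan.append((block_id, offset, length))
    return plan


for block_id, offset, length in plan_blocks(total_size=10, block_size=4):
    print(block_id, offset, length)
```

Each tuple would correspond to one Put Block call, which can run in parallel or be retried on its own. A final Put Block List with the IDs in order commits the blob.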

Append Blobs

Append blobs are optimized for append operations, making them perfect for logging scenarios where data is continuously added to the end of the file.

- Best for: Log files, telemetry data, event streams

- Limitation: Maximum of 50,000 blocks per blob

- Benefit: Efficient writes without rewriting the entire file

Each block in an append blob is limited to 4 MiB, which caps an append blob at roughly 195 GiB. Existing blocks cannot be modified or deleted; data can only be added to the end. This append-only behavior helps preserve data integrity in logging workflows.
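The block and size limits quoted above translate into a maximum append blob size with simple arithmetic. The sketch below works it out, treating the per-block limit as 4 MiB; the helper names are hypothetical.

```python
MIB = 1024 ** 2
GIB = 1024 ** 3

MAX_APPEND_BLOCKS = 50_000        # block limit quoted above
MAX_APPEND_BLOCK_BYTES = 4 * MIB  # per-block limit quoted above, taken as 4 MiB


def max_append_blob_bytes() -> int:
    """Largest possible append blob given the two limits."""
    return MAX_APPEND_BLOCKS * MAX_APPEND_BLOCK_BYTES


def appends_needed(payload_bytes: int) -> int:
    """Number of append operations needed to write payload_bytes."""
    return -(-payload_bytes // MAX_APPEND_BLOCK_BYTES)  # ceiling division


print(max_append_blob_bytes() / GIB)  # 195.3125
print(appends_needed(10 * MIB))       # 3
```

In practice, a logging pipeline that writes many small entries will hit the 50,000-block limit long before the size limit, so batching entries per append is usually worth it.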

Page Blobs

Page blobs are designed for random read/write operations and are primarily used to store virtual hard disks (VHDs) for Azure Virtual Machines.

- Best for: VHDs, database files, disk images

- Size: Up to 8 TiB per blob

- Granularity: 512-byte pages that can be updated individually

Because page blobs support frequent updates to specific parts of a file, they are essential for I/O-intensive applications. They also underpin Azure Managed Disks, which simplify VM disk management.
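Because page blob writes must start and end on 512-byte page boundaries, a client usually has to widen an arbitrary byte range to whole pages before writing. A minimal sketch of that calculation follows; the function name `aligned_page_range` is hypothetical and nothing here calls Azure.

```python
PAGE_SIZE = 512  # page granularity described above


def aligned_page_range(offset: int, length: int) -> tuple:
    """Return the inclusive (start, end) byte range of whole pages covering a write."""
    if offset < 0 or length <= 0:
        raise ValueError("offset must be >= 0 and length must be > 0")
    start = (offset // PAGE_SIZE) * PAGE_SIZE
    last_byte = offset + length - 1
    end = (last_byte // PAGE_SIZE + 1) * PAGE_SIZE - 1
    return start, end


print(aligned_page_range(700, 100))  # (512, 1023)
```

Here a 100-byte write at offset 700 falls entirely inside the second page, so only bytes 512 through 1023 need to be read, patched, and written back.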

Key Features of Azure Blob Storage

Azure Blob Storage isn’t just about storing data—it’s about doing so intelligently, securely, and efficiently. Its rich feature set makes it a top contender in the cloud storage space.

Scalability and Performance

One of the standout features of Azure Blob Storage is its virtually unlimited scalability. You can store petabytes of data without worrying about capacity limits.

- Automatic scaling: No manual intervention needed as data grows

- High throughput: A standard storage account handles on the order of 20,000 requests per second by default, with higher limits in some regions or on request

- Global reach: Data can be accessed from anywhere via HTTPS

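Access tiers (Hot, Cool, Archive) allow you to balance access frequency with cost, ensuring optimal resource utilization. These are separate from the account's performance tier (Standard or Premium), which determines the underlying hardware.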

Data Redundancy and Durability

Data durability is critical in cloud storage. Azure Blob Storage offers multiple redundancy options to protect against hardware failures and regional outages.

- LRS (Locally Redundant Storage): Copies data three times within a single data center.

- GRS (Geo-Redundant Storage): Replicates data to a secondary region hundreds of miles away.

- RA-GRS (Read-Access Geo-Redundant Storage): Adds a read-only endpoint in the secondary region, so data stays readable there even when the primary region is unavailable.

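Azure Storage is designed for at least 99.999999999% (11 nines) durability of objects over a given year with LRS, and at least 16 nines with GRS. These are design targets rather than contractual guarantees, but they mean your data is extremely unlikely to be lost.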

“Azure storage replicates your data at least three times within a single data center for protection against hardware failures.” — Azure Architecture Center

Security and Access Control

Security is baked into Azure Blob Storage at every level, from encryption to identity management.

- Encryption: Data is encrypted at rest with 256-bit AES, using Microsoft-managed keys by default or customer-managed keys stored in Azure Key Vault.

- Authentication: Uses Microsoft Entra ID (formerly Azure Active Directory), shared access signatures (SAS), or account keys.

- Network Security: Supports virtual networks, firewalls, and private endpoints to restrict access.

For compliance, Azure Blob Storage meets standards like GDPR, HIPAA, and ISO 27001, making it suitable for regulated industries.

Access Tiers in Azure Blob Storage

To optimize cost and performance, Azure offers three primary access tiers: Hot, Cool, and Archive. Choosing the right tier depends on how frequently you access your data.

Hot Access Tier

The Hot tier is designed for data that is accessed frequently. It offers the lowest access costs but higher storage costs compared to other tiers.

- Best for: Active data, frequently viewed media, transactional logs

- Latency: Low, ideal for real-time applications

- Pricing: Higher storage cost, lower retrieval cost

This tier is perfect for web applications serving images or videos directly from Blob Storage via Content Delivery Networks (CDNs).

Cool Access Tier

The Cool tier is for data that is infrequently accessed but requires quick retrieval when needed. It’s a cost-effective option for backup and disaster recovery data.

- Best for: Backup files, older logs, infrequently accessed documents

- Minimum storage duration: 30 days

- Retrieval cost: Slightly higher than Hot tier

While storage costs are lower, accessing data incurs a small fee, so it’s not ideal for high-frequency reads.

Archive Access Tier

The Archive tier offers the lowest storage cost but is designed for long-term retention with rare access. Retrieval can take several hours.

- Best for: Compliance archives, historical records, legal data

- Retrieval options: Standard priority (rehydration can take up to 15 hours) or High priority (often under 1 hour for blobs smaller than 10 GB)

- Minimum duration: 180 days; early deletion incurs fees

Despite slow retrieval, the Archive tier can reduce storage costs by up to 90% compared to the Hot tier, making it ideal for regulatory archiving.
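The minimum storage durations listed above matter when data is deleted or moved early: the remaining days are billed as an early deletion charge. The sketch below computes how many days would be charged. The function name is hypothetical, and actual billing is prorated against the current per-GB price, which is not modeled here.

```python
# Minimum storage durations from the tier descriptions above (Hot has none).
MIN_RETENTION_DAYS = {"hot": 0, "cool": 30, "archive": 180}


def early_deletion_days(tier: str, days_stored: int) -> int:
    """Days still billable if a blob leaves the tier after days_stored days."""
    if days_stored < 0:
        raise ValueError("days_stored must be >= 0")
    minimum = MIN_RETENTION_DAYS[tier.lower()]
    return max(0, minimum - days_stored)


print(early_deletion_days("archive", 45))  # 135
print(early_deletion_days("cool", 40))     # 0
```

A blob archived and then deleted after 45 days is still charged for the remaining 135 days, which is worth factoring in before archiving short-lived data.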

How to Use Azure Blob Storage: Practical Steps

Getting started with Azure Blob Storage is straightforward. Whether you’re a developer or an IT administrator, you can set up and manage storage quickly using various tools.

Creating a Storage Account

The first step is creating a storage account in the Azure portal.

- Navigate to the Azure portal and select “Storage accounts”

- Click “Create” and choose a unique name, region, and performance tier

- Select redundancy (LRS, GRS, etc.) and enable features like encryption and versioning

Once created, your storage account provides endpoints for Blob, Queue, Table, and File services.

Uploading and Managing Blobs

You can upload blobs using the Azure portal, Azure CLI, PowerShell, or SDKs in languages like Python, .NET, and Node.js.

- Azure Portal: Drag-and-drop interface for small files

- Azure Storage Explorer: Free GUI tool for managing blobs across subscriptions

- SDKs: Programmatic control for automation and integration

For example, using the Azure CLI:

    az storage blob upload --account-name mystorage --container-name mycontainer --name example.txt --file example.txt

This command uploads a local file to a specified container. You can also set metadata, access tiers, and permissions during upload.
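The same upload can be done from Python. The sketch below assumes the `azure-storage-blob` and `azure-identity` packages are installed and that the signed-in identity has a data-plane role such as Storage Blob Data Contributor on the account. The wrapper name `upload_file` is hypothetical, and the account, container, and file names are the placeholders from the CLI example.

```python
def upload_file(account_name: str, container: str, blob_name: str, path: str) -> None:
    """Upload a local file as a block blob, mirroring the CLI command above."""
    # Imported lazily so this module loads even without the Azure packages installed.
    from azure.identity import DefaultAzureCredential
    from azure.storage.blob import BlobServiceClient

    service = BlobServiceClient(
        account_url=f"https://{account_name}.blob.core.windows.net",
        credential=DefaultAzureCredential(),
    )
    blob = service.get_blob_client(container=container, blob=blob_name)
    with open(path, "rb") as data:
        # Raises an error if the blob already exists; pass overwrite=True to replace it.
        blob.upload_blob(data)


# Example call (requires real Azure credentials and an existing container):
# upload_file("mystorage", "mycontainer", "example.txt", "example.txt")
```

Using `DefaultAzureCredential` rather than an account key keeps secrets out of the script and lets the same code run locally and in Azure-hosted environments.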

Setting Access Policies and SAS Tokens

To securely share blobs without exposing account keys, use Shared Access Signatures (SAS).

- SAS tokens grant time-limited access to specific resources

- Can be configured for read, write, delete, or list permissions

- Supports IP restrictions and protocol enforcement (HTTPS only)

For example, generating a SAS URL in the portal allows a user to download a blob for 24 hours without full account access.
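When a SAS URL is handed to you, it can be useful to check what it grants before using it. The sketch below reads the standard SAS query parameters `sp` (permissions), `se` (expiry), and `spr` (allowed protocol). It assumes the expiry uses the full `YYYY-MM-DDTHH:MM:SSZ` form (other ISO 8601 variants are also valid in practice), and the URL and `sig` value shown are placeholders, not a working token.

```python
from datetime import datetime, timezone
from typing import Optional
from urllib.parse import parse_qs, urlsplit


def describe_sas(url: str, now: Optional[datetime] = None) -> dict:
    """Summarize the permissions, expiry, and protocol restriction of a SAS URL."""
    params = parse_qs(urlsplit(url).query)
    expiry = datetime.strptime(params["se"][0], "%Y-%m-%dT%H:%M:%SZ")
    expiry = expiry.replace(tzinfo=timezone.utc)
    now = now or datetime.now(timezone.utc)
    return {
        "permissions": params.get("sp", [""])[0],
        "expires": expiry,
        "expired": now >= expiry,
        "https_only": params.get("spr", [""])[0] == "https",
    }


sample = (
    "https://mystorage.blob.core.windows.net/mycontainer/example.txt"
    "?sv=2022-11-02&sp=r&se=2030-01-01T00%3A00%3A00Z&spr=https&sr=b&sig=PLACEHOLDER"
)
print(describe_sas(sample))
```

This only inspects the token's claims; the service still validates the signature, so editing these parameters by hand invalidates the token.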

Integration with Other Azure Services

Azure Blob Storage doesn’t exist in isolation—it integrates seamlessly with other Azure services to enhance functionality and streamline workflows.

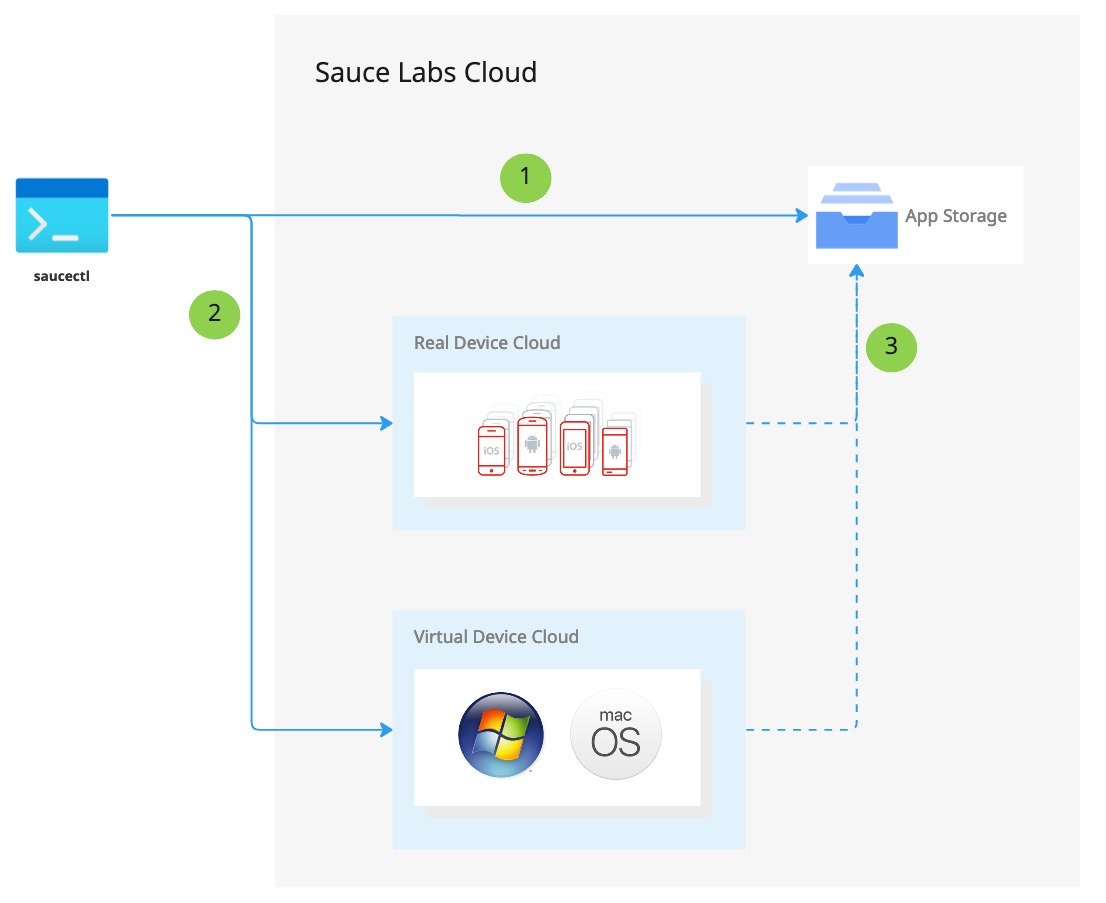

Azure Functions and Blob Triggers

Azure Functions can be triggered automatically when a new blob is uploaded, enabling event-driven architectures.

- Use case: Automatically process uploaded images (resize, compress)

- Benefit: Serverless computing reduces infrastructure overhead

- Setup: Configure a blob trigger in your function app

This integration is perfect for building responsive, scalable data pipelines without managing servers.

Azure Logic Apps and Data Workflows

Logic Apps allow you to automate business processes by connecting Blob Storage to other services like Office 365, Salesforce, or SQL Database.

- Scenario: When a new invoice PDF is uploaded, extract data and save to a database

- Tool: Use the “When a blob is added or modified” trigger

- Advantage: No-code/low-code automation for non-developers

This makes Azure Blob Storage a central hub in enterprise integration scenarios.

Azure Data Lake and Analytics

For big data analytics, Azure Blob Storage can serve as a data lake foundation, especially when combined with Azure Data Lake Storage Gen2.

- Feature: Hierarchical namespace for folder-like organization

- Integration: Works with Azure Databricks, HDInsight, and Synapse Analytics

- Benefit: Enables large-scale data processing and machine learning

Data Lake Storage Gen2 builds on Blob Storage, adding file system semantics for advanced analytics workloads.

Cost Optimization Strategies for Azure Blob Storage

While Azure Blob Storage is cost-effective, unmanaged usage can lead to unexpected bills. Implementing cost optimization strategies ensures you get the most value.

Leverage Lifecycle Management

Azure Blob Storage lifecycle management automates the transition of data between tiers based on rules.

- Create rules to move blobs from Hot to Cool after 30 days

- Archive data after 90 days of inactivity

- Delete temporary files after 1 year

This reduces manual effort and ensures data is always in the most cost-effective tier.

“Lifecycle management helps minimize costs by automatically moving data to the appropriate tier.” — Microsoft Azure Best Practices
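Lifecycle rules are expressed as a JSON policy document. The sketch below builds one that matches the three example rules above, using days since last modification as the condition. The function name and the rule name `tier-and-expire` are hypothetical. Rules based on last access time instead would need access tracking enabled and a different condition key.

```python
import json


def lifecycle_policy(cool_after: int = 30, archive_after: int = 90,
                     delete_after: int = 365) -> dict:
    """Build a lifecycle policy that tiers block blobs down and eventually deletes them."""
    if not 0 <= cool_after < archive_after < delete_after:
        raise ValueError("expected cool_after < archive_after < delete_after")
    return {
        "rules": [
            {
                "enabled": True,
                "name": "tier-and-expire",  # hypothetical rule name
                "type": "Lifecycle",
                "definition": {
                    "filters": {"blobTypes": ["blockBlob"]},
                    "actions": {
                        "baseBlob": {
                            "tierToCool": {"daysAfterModificationGreaterThan": cool_after},
                            "tierToArchive": {"daysAfterModificationGreaterThan": archive_after},
                            "delete": {"daysAfterModificationGreaterThan": delete_after},
                        }
                    },
                },
            }
        ]
    }


print(json.dumps(lifecycle_policy(), indent=2))
```

The printed JSON can be saved to a file and applied through the portal or with `az storage account management-policy create`. A `prefixMatch` filter can narrow the rule to specific containers or paths.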

Monitor Usage with Azure Cost Management

Use Azure Cost Management + Billing to track storage consumption and identify cost trends.

- Set up budgets and alerts for unexpected spikes

- Analyze spending by resource, department, or tag

- Export reports for financial auditing

Tagging resources (e.g., “Environment=Production”, “Project=Marketing”) enables granular cost tracking.

Use Azure Reserved Capacity

For predictable, long-term workloads, Azure Reserved Capacity offers significant discounts on storage costs.

- Commit to 1 or 3 years of storage usage

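- Capacity is reserved in units of 100 TiB or 1 PiB per month; the discount over pay-as-you-go depends on tier, redundancy, region, and term, so check current pricing rather than assuming a fixed percentage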

- Applies to standard storage accounts in specific regions

This is ideal for organizations with stable data growth and compliance archiving needs.

Best Practices for Securing Azure Blob Storage

Security should never be an afterthought. Following best practices ensures your data remains protected from unauthorized access and breaches.

Enable Soft Delete and Versioning

Soft delete protects against accidental deletion by retaining deleted blobs for a configurable period (up to 365 days).

- Recover deleted blobs without backups

- Works with snapshots for point-in-time recovery

- Essential for ransomware protection

Versioning automatically maintains previous versions of a blob, allowing rollback to any state.

Use Private Endpoints and Firewalls

To prevent public internet exposure, configure network security settings.

- Set up private endpoints to access Blob Storage over Azure Private Link

- Restrict access to specific IP ranges using firewall rules

- Disable public network access for maximum security

This is crucial for environments handling sensitive or regulated data.

Implement Role-Based Access Control (RBAC)

Instead of using account keys, assign granular permissions using Azure RBAC.

- Roles: Storage Blob Data Reader, Storage Blob Data Contributor, Storage Blob Data Owner

- Principle of least privilege: Grant only necessary permissions

- Integration with Microsoft Entra ID (formerly Azure AD) for centralized identity management

RBAC improves auditability and reduces the risk of credential misuse.

What is Azure Blob Storage used for?

Azure Blob Storage is used for storing unstructured data like images, videos, backups, logs, and large datasets. It’s ideal for web content delivery, data archiving, backup solutions, and integration with big data analytics platforms.

How much does Azure Blob Storage cost?

Pricing depends on the access tier (Hot, Cool, Archive), redundancy option, and data volume. The Hot tier starts at around $0.018 per GB/month with LRS, while the Archive tier can be as low as $0.00099 per GB/month. Additional costs apply for operations and data transfer.
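To see how much the tier choice affects the bill, the sketch below compares monthly storage cost for the two per-GB figures quoted above. The prices are only those illustrative figures, vary by region and over time, and the calculation ignores operation, retrieval, and data transfer charges. The function name is hypothetical.

```python
# Illustrative per-GB monthly prices taken from the figures quoted above.
PRICE_PER_GB_MONTH = {
    "hot": 0.018,
    "archive": 0.00099,
}


def monthly_storage_cost(size_gb: float, tier: str) -> float:
    """Storage-only monthly cost in USD for size_gb of data in the given tier."""
    return size_gb * PRICE_PER_GB_MONTH[tier.lower()]


size_gb = 10_000  # roughly 10 TB
hot = monthly_storage_cost(size_gb, "hot")
archive = monthly_storage_cost(size_gb, "archive")
print(f"Hot: ${hot:.2f}  Archive: ${archive:.2f}")
```

The gap narrows once retrieval and early deletion charges are included, so the comparison is most meaningful for data that is rarely or never read back.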

Can I access Azure Blob Storage from on-premises applications?

Yes, you can access Azure Blob Storage from on-premises applications using REST APIs, SDKs, or tools like AzCopy and Azure Storage Explorer. Secure access can be ensured via SAS tokens, private endpoints, or hybrid connections.

What is the difference between Azure Blob Storage and Azure Data Lake Storage?

Azure Blob Storage is a general-purpose object store, while Azure Data Lake Storage Gen2 adds a hierarchical file system on top of Blob Storage, optimized for big data analytics. Both share the same underlying infrastructure, but Data Lake supports directory structures and advanced ACLs.

How do I migrate data to Azure Blob Storage?

Data can be migrated using tools like AzCopy, Azure Data Box for large-scale offline transfers, or Azure Data Factory for scheduled copy pipelines. You can also use third-party tools or write custom scripts using Azure SDKs to automate the process.

In conclusion, Azure Blob Storage is a powerful, flexible, and secure solution for managing unstructured data in the cloud. From scalable storage and multiple access tiers to deep integration with Azure services and robust security features, it empowers organizations to store, protect, and analyze data efficiently. By leveraging lifecycle policies, monitoring tools, and best practices, you can optimize both performance and cost. Whether you’re building a web application, securing backups, or enabling data analytics, Azure Blob Storage provides the foundation you need to succeed in the cloud era.
